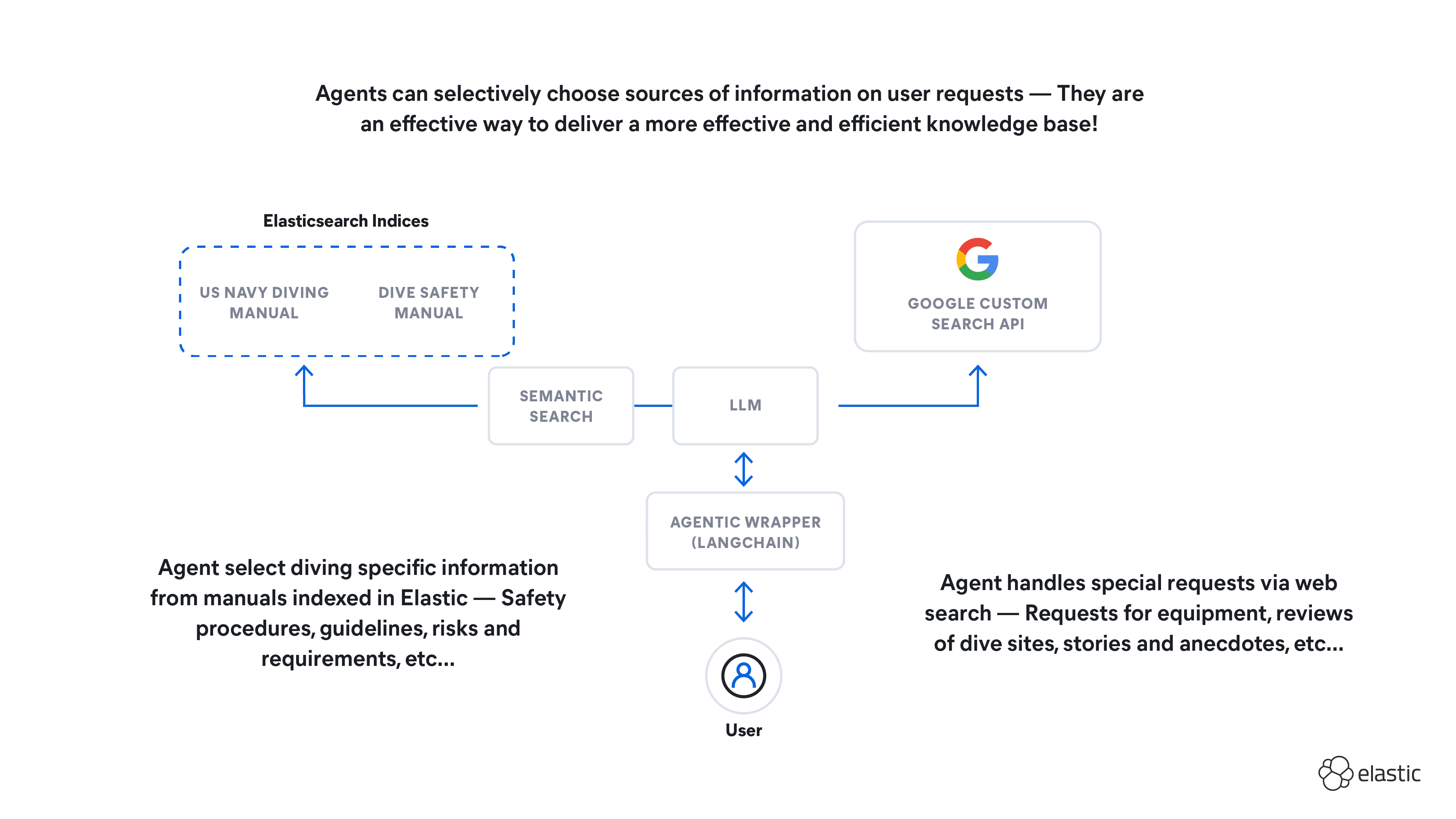

In this blog, we will connect Elasticsearch to Google’s Gemini 1.5 chat model using Elastic’s Playground and the Vertex AI API. Adding Gemini models to Playground lets Google Cloud developers quickly ground LLMs, test retrieval, tune chunking, and ship gen AI search apps to production with Elastic.

You will need an Elasticsearch cluster up and running. We will use a Serverless Project on Elastic Cloud. If you don’t have an account, you can sign up for a free trial.

You will also need a Google Cloud account with Vertex AI enabled. If you don’t have a Google Cloud account, you can sign up for a free trial.

Steps to create RAG apps with Vertex AI Gemini models & Playground

1. Configuring Vertex AI

First, we will configure a Vertex AI service account, which lets Elasticsearch make secure API calls to the Gemini model. Google Cloud’s documentation has detailed instructions, but we will cover the main points here.

Go to the Create Service Account section of the Google Cloud console and select the project that has Vertex AI enabled.

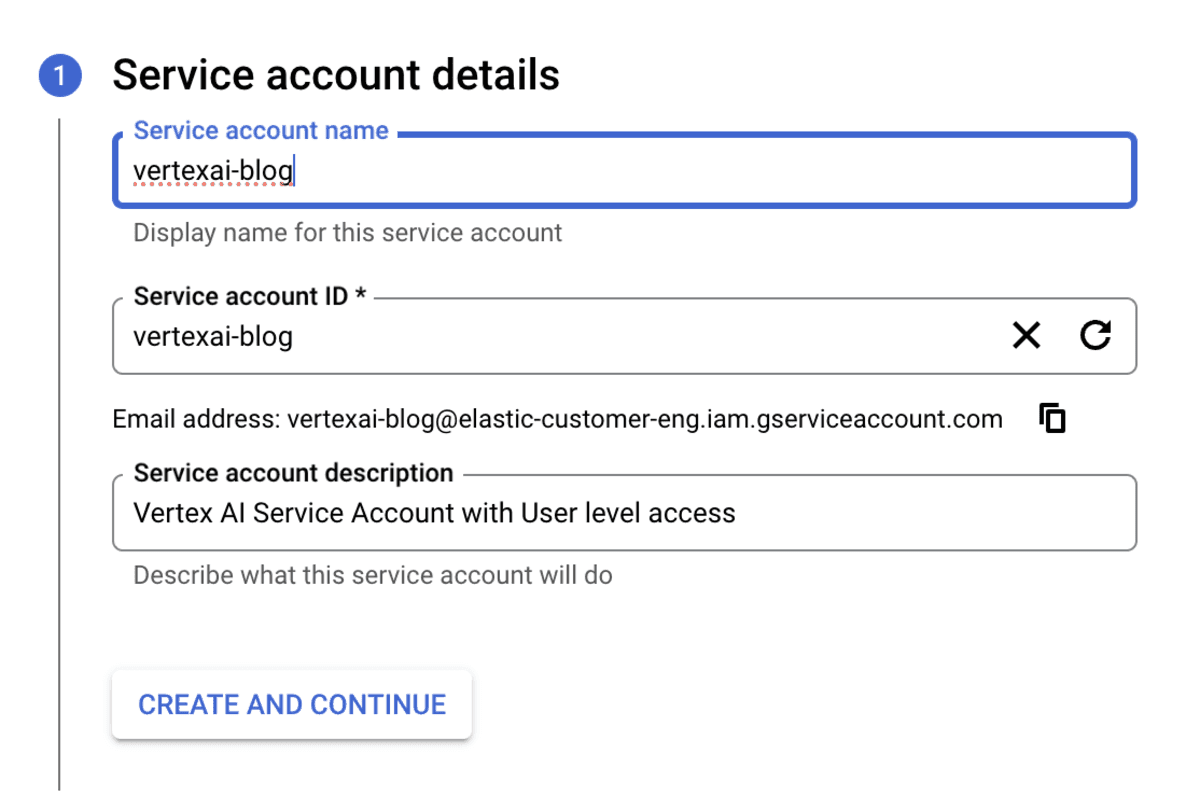

Next, give your service account a name and, optionally, a description. Click “Create and Continue”.

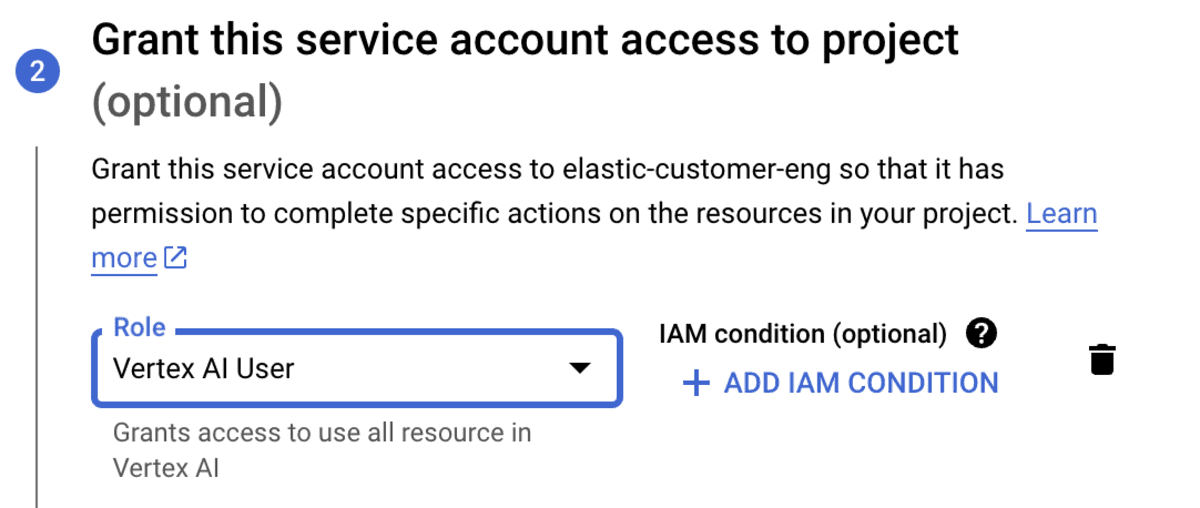

Set the access controls for your project. For this blog, we used the “Vertex AI User” role, but you need to ensure your access controls are appropriate for your project and account.

Click “Done”.

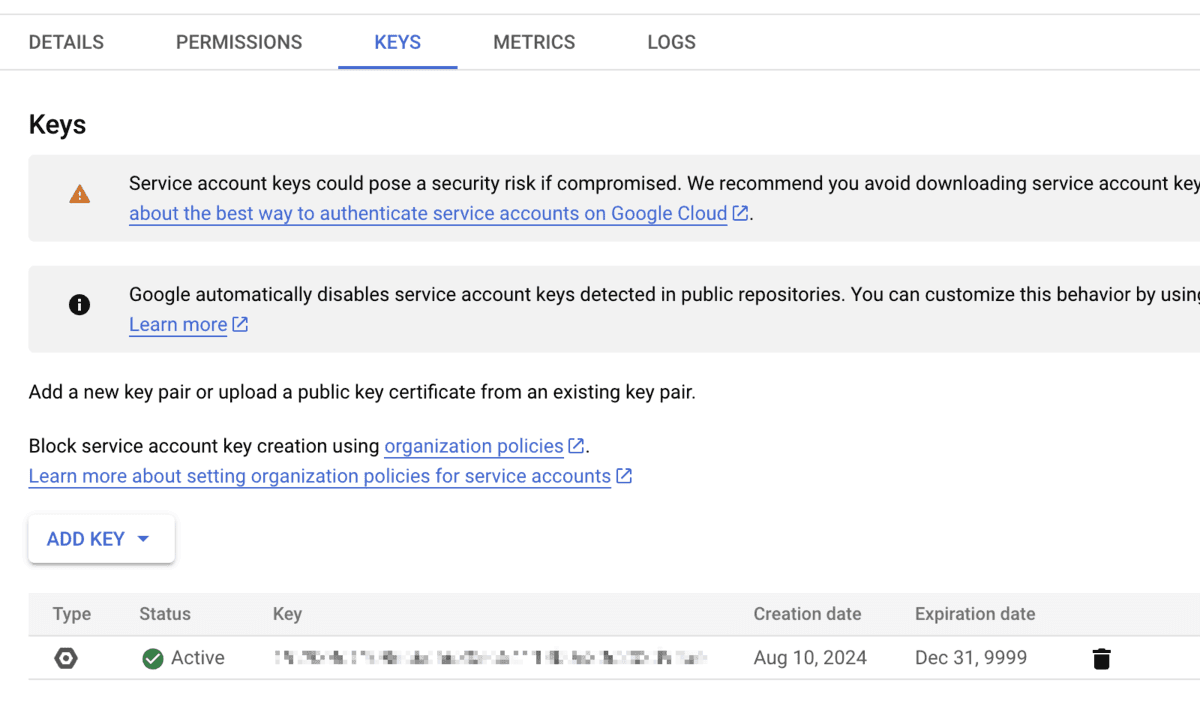

The final step in Google Cloud is to create a key for the service account and download it in JSON format.

Open the “KEYS” tab of your service account, click “ADD KEY”, then select “Create New”.

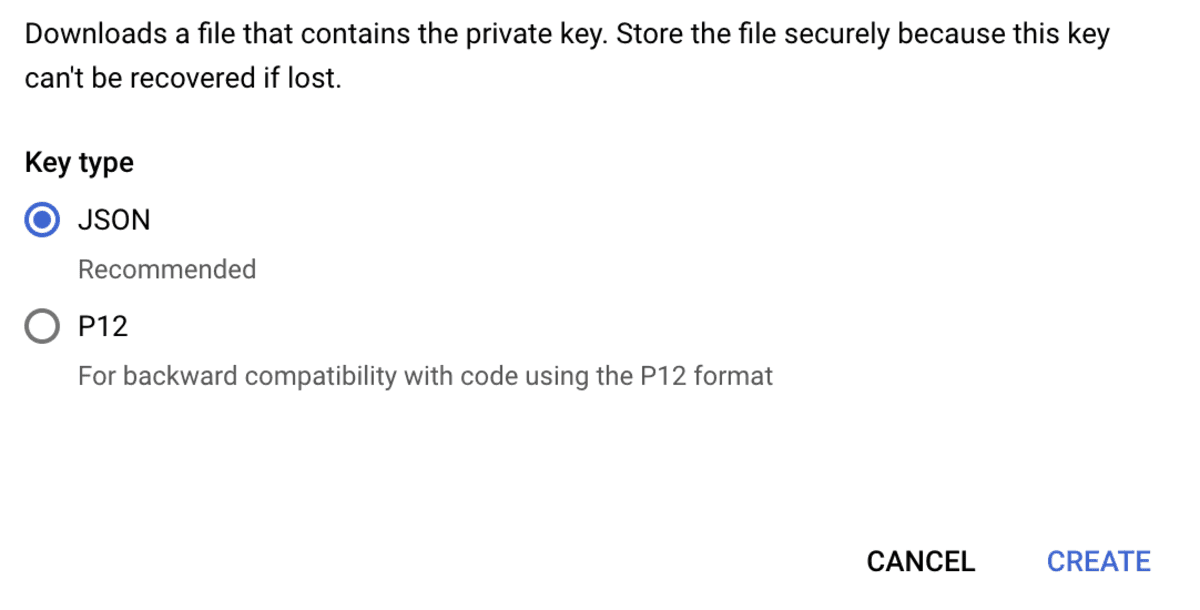

Make sure you select “JSON” as the key type, then click “CREATE”.

The key will be created and automatically downloaded to your computer. We will need this key in the next section.
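Before pasting the key anywhere, it can be worth a quick sanity check. The sketch below assumes the standard layout of a Google Cloud service account key file (fields such as `type`, `project_id`, `private_key`, and `client_email`); the helper name `load_service_account_key` is hypothetical. It verifies that the expected fields are present and returns the key as compact, single-line JSON, which is convenient to copy into a form field.

```python
import json
from pathlib import Path

# Fields we expect in a Google Cloud service account key file (assumed standard layout).
REQUIRED_FIELDS = ("type", "project_id", "private_key_id", "private_key", "client_email")


def load_service_account_key(path: str) -> str:
    """Hypothetical helper: validate a downloaded key file and return it as compact JSON."""
    data = json.loads(Path(path).read_text(encoding="utf-8"))
    missing = [field for field in REQUIRED_FIELDS if not data.get(field)]
    if missing:
        raise ValueError(f"key file is missing fields: {', '.join(missing)}")
    if data["type"] != "service_account":
        raise ValueError(f"expected type 'service_account', got {data['type']!r}")
    # Compact separators put the whole key on one line; newlines inside the
    # private key stay escaped as \n, so the JSON remains valid.
    return json.dumps(data, separators=(",", ":"))
```

For example, `load_service_account_key("my-key.json")` (a placeholder path) raises a `ValueError` if the file is not a service account key. Treat the returned string as a secret: it grants access to your Google Cloud project.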

2. Connect to your LLM from Playground

With Google Cloud configured, we can now set up the Gemini LLM connection in Elastic’s Playground.

This blog assumes you already have data in Elasticsearch that you want to use with Playground. If not, follow the Search Labs blog “Playground: Experiment with RAG applications with Elasticsearch in minutes” to get started.

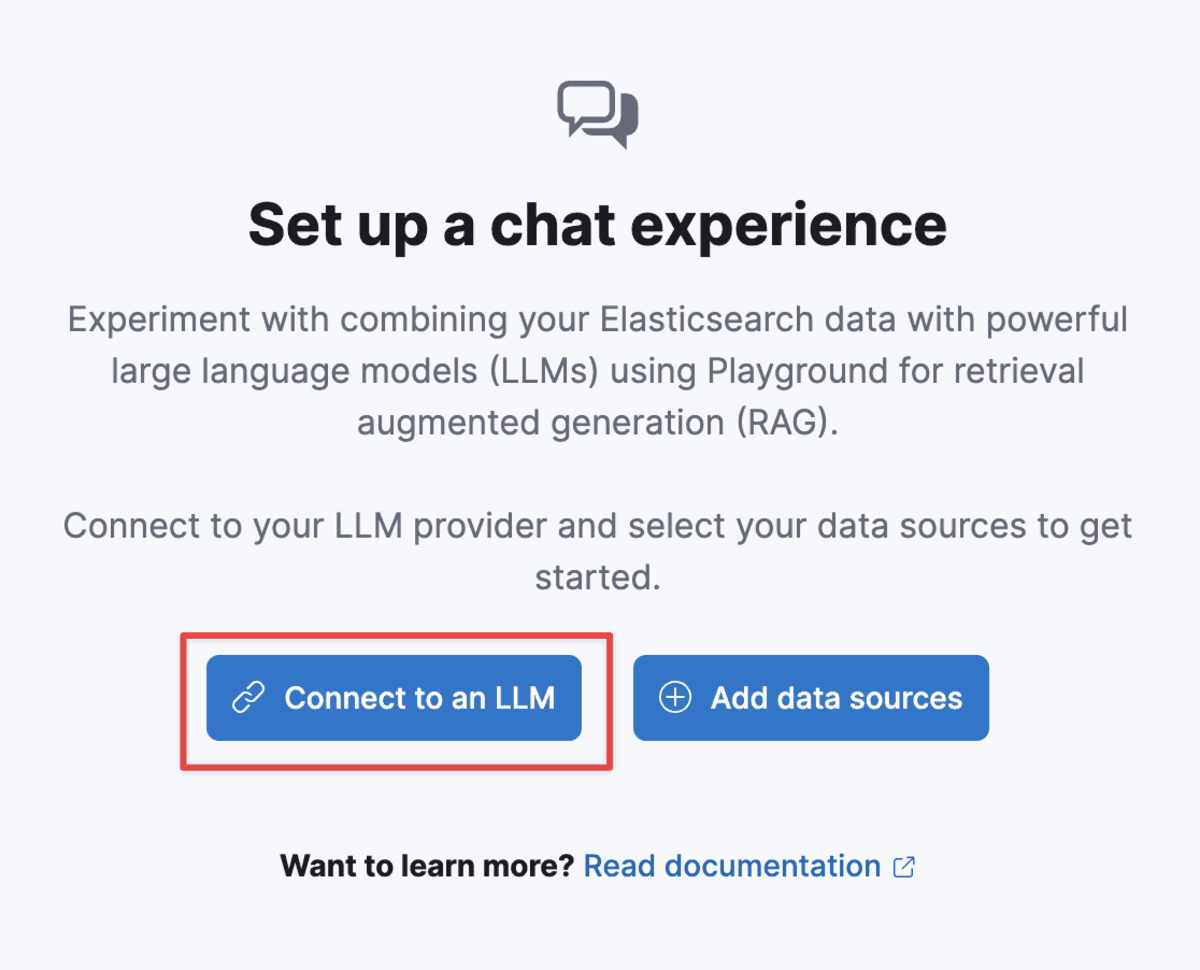

In Kibana, select Playground from the side navigation menu. In Serverless, it is under the “Build” heading. The first time Playground opens, select “Connect to an LLM”.

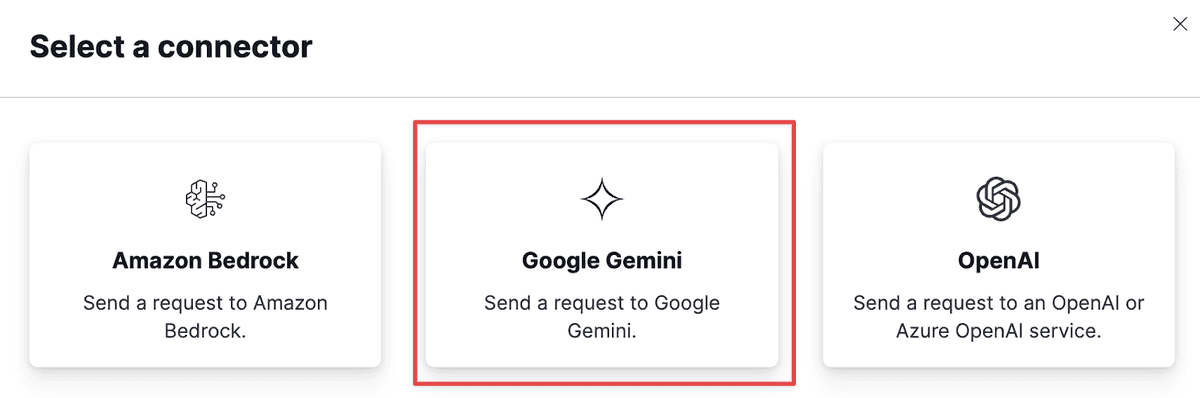

Select “Google Gemini”:

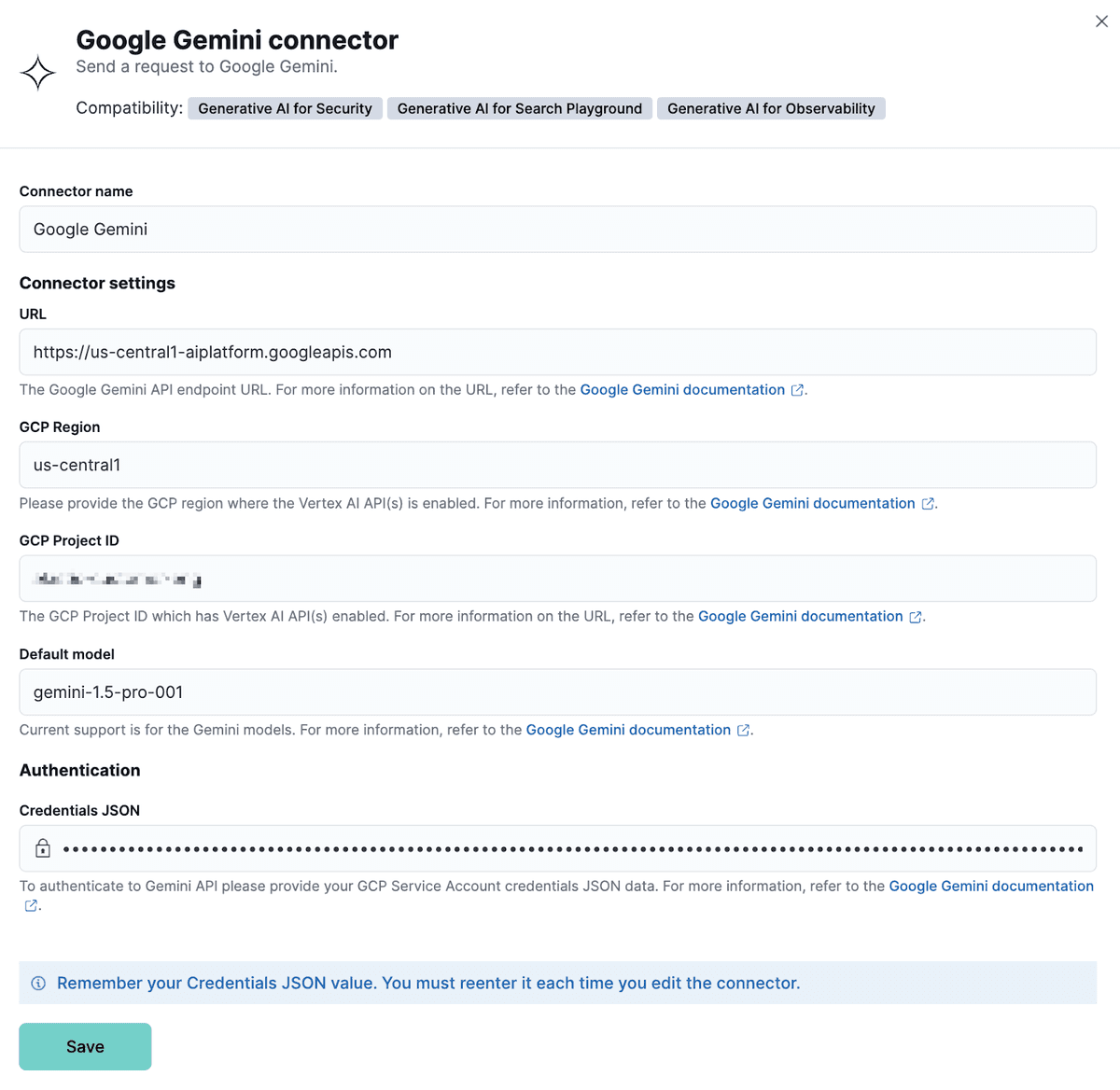

Fill out the form to complete the configuration.

Open the JSON credentials file you downloaded in the previous section, copy its complete contents, and paste them into the “Credentials JSON” field. Then click “Save”.
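If you would rather script this step, Kibana also exposes a connectors API. The sketch below only builds a request body; it does not send it. The connector type ID `.gemini` and the field names `apiUrl`, `defaultModel`, `gcpRegion`, `gcpProjectID`, and `credentialsJson` are our assumptions based on the Kibana Google Gemini connector, so check them against the Kibana documentation for your version. The region, model name, and connector name are placeholder values, and the function name is hypothetical.

```python
import json


def build_gemini_connector_body(credentials_json: str,
                                region: str = "us-central1",
                                model: str = "gemini-1.5-pro-001",
                                name: str = "Gemini (Vertex AI)") -> dict:
    """Hypothetical helper: body for Kibana's create-connector endpoint.

    Assumption: field names follow the Kibana Google Gemini connector;
    verify them for your Kibana version before use.
    """
    key = json.loads(credentials_json)  # fails fast if the pasted JSON is malformed
    return {
        "name": name,
        "connector_type_id": ".gemini",
        "config": {
            # Regional Vertex AI endpoint; it should match gcpRegion.
            "apiUrl": f"https://{region}-aiplatform.googleapis.com",
            "defaultModel": model,  # placeholder model name
            "gcpRegion": region,
            # Assumes the service account lives in the project with Vertex AI enabled.
            "gcpProjectID": key["project_id"],
        },
        # The full key is sent as a secret, just like the "Credentials JSON" field.
        "secrets": {"credentialsJson": credentials_json},
    }
```

You would then POST this body to `/api/actions/connector` on your Kibana endpoint, with a `kbn-xsrf` header and an `Authorization: ApiKey <your-api-key>` header. The form in Playground does the same job without any code.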

3. It’s Playground Time!

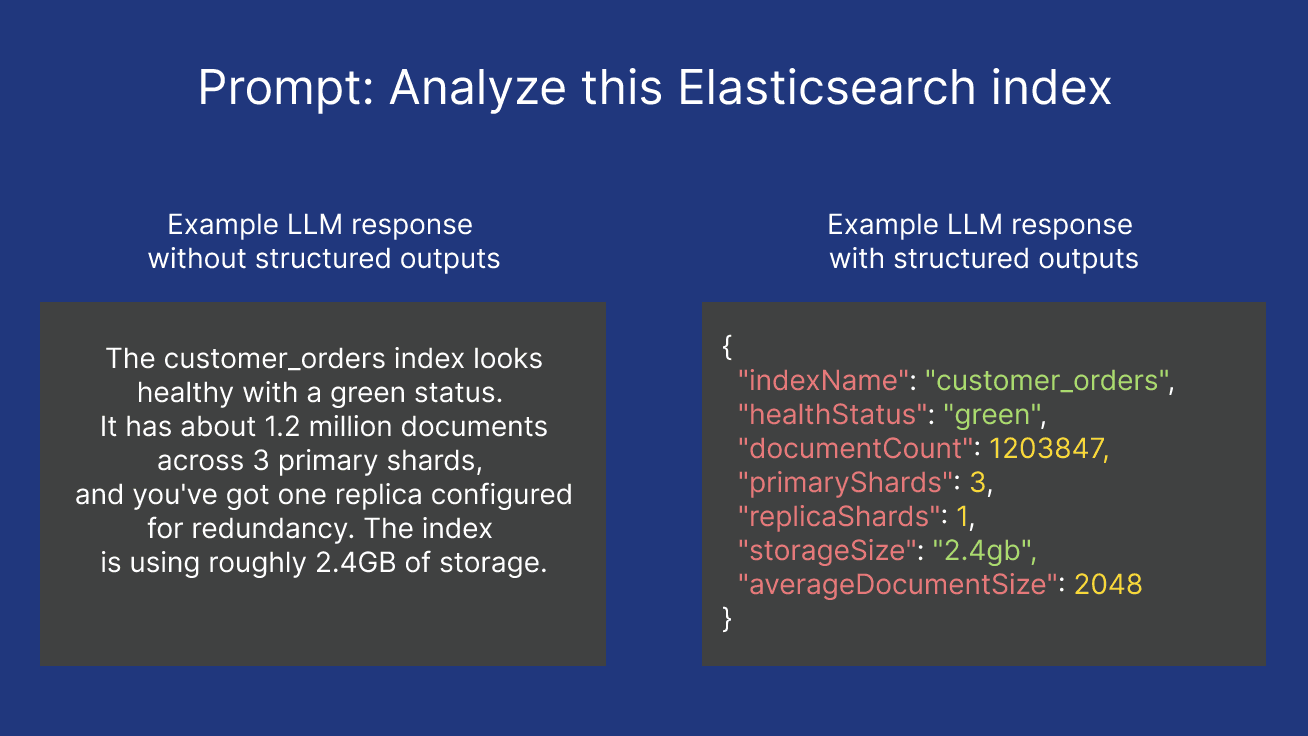

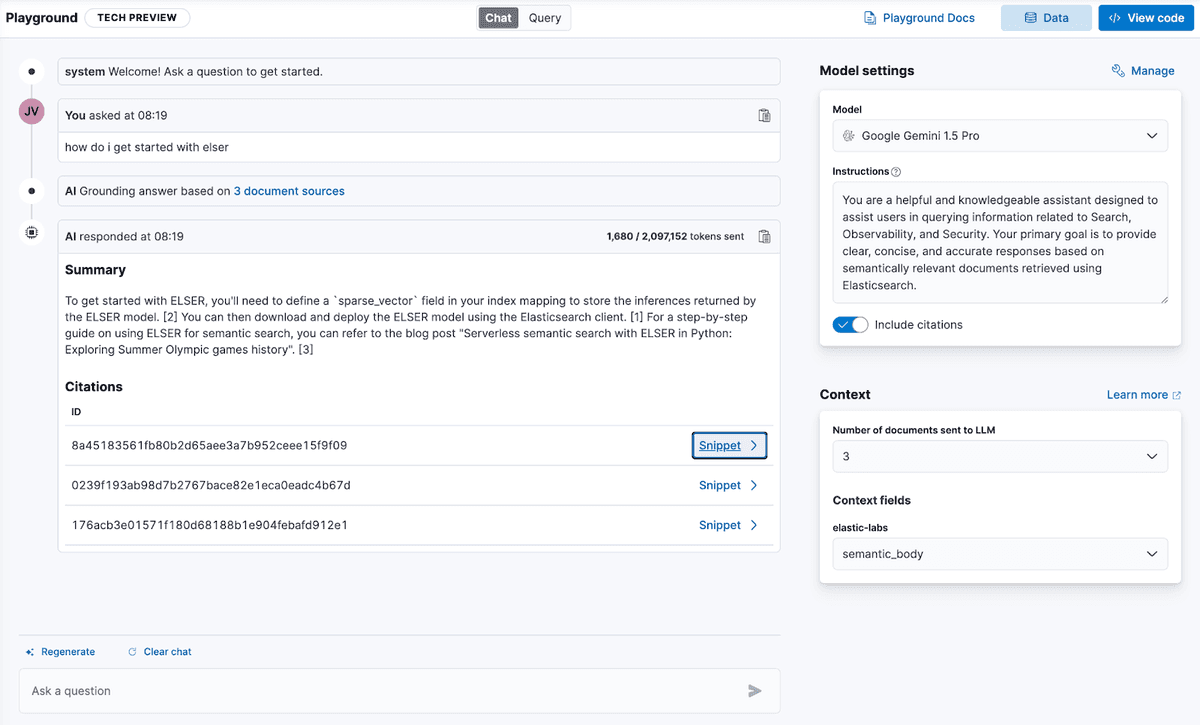

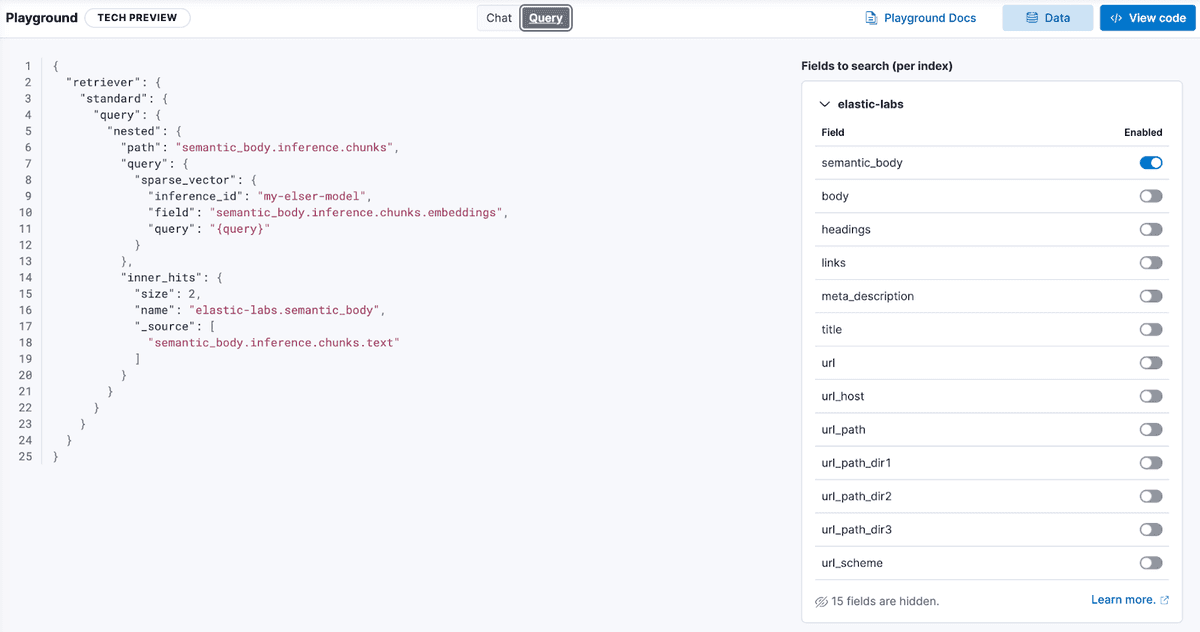

Elastic’s Playground lets you experiment with RAG context settings and system prompts before integrating them into your application code.

By changing settings while chatting with the model, you can see which ones produce the best responses for your application.

You can also configure which fields in your Elasticsearch data are searched to add context to the chat completion request. Adding relevant context helps ground the model, so it gives more accurate responses.
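Conceptually, the context step takes the top retrieved documents, keeps only the fields you selected, and places their text in the prompt next to the system instructions. The following is a minimal sketch of that idea, not Playground’s actual implementation; the function `build_grounded_prompt`, the prompt wording, and the `max_docs` parameter are hypothetical.

```python
def build_grounded_prompt(system_prompt: str, question: str, hits: list[dict],
                          context_fields: list[str], max_docs: int = 3) -> str:
    """Hypothetical sketch: assemble a grounded prompt from Elasticsearch hits."""
    blocks = []
    for hit in hits[:max_docs]:  # only the top-ranked documents are used as context
        source = hit.get("_source", {})
        # Keep only the configured context fields that exist in this document.
        parts = [f"{field}: {source[field]}" for field in context_fields if field in source]
        if parts:
            blocks.append("\n".join(parts))
    context = "\n---\n".join(blocks) if blocks else "(no context found)"
    return (
        f"{system_prompt}\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer using only the context above."
    )
```

Capping both the number of documents and the fields included keeps the prompt short and focused, which is the same trade-off Playground lets you explore interactively through its context settings.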

This step uses ELSER, Elastic’s built-in sparse embedding model, to retrieve context through semantic search; that context is then passed to the Gemini model.
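For reference, an ELSER retrieval request can be expressed with the `sparse_vector` query, available in recent Elasticsearch releases (8.15 and later) and in Serverless. The sketch below builds such a search body; the field name, inference endpoint ID, and source fields are placeholders, and the helper name is hypothetical. Playground generates its own query for your index, so this only illustrates the shape of one.

```python
def build_elser_query(question: str, sparse_field: str, inference_id: str,
                      source_fields: list[str], size: int = 3) -> dict:
    """Hypothetical helper: search body for ELSER-based semantic retrieval."""
    return {
        "size": size,  # number of documents to retrieve as context
        "query": {
            "sparse_vector": {
                "field": sparse_field,         # field holding ELSER token weights (placeholder)
                "inference_id": inference_id,  # ELSER inference endpoint ID (placeholder)
                "query": question,             # expanded into weighted tokens at search time
            }
        },
        # Return only the fields that will be passed to Gemini as context.
        "_source": source_fields,
    }
```

You could send the resulting body to `POST /<your-index>/_search`; the hits it returns are the documents whose selected fields end up in the prompt.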

That’s it (for now)

Conversational search is an exciting area where developers are using powerful large language models, such as those offered by Google Vertex AI, to build new experiences. Playground simplifies the process of prototyping and tuning, enabling you to ship your apps more quickly.

Explore more ideas to build with Elasticsearch and Google Vertex AI, and happy searching!